This computer program is teaching itself how to destroy humans ... at a millennia-old Chinese board game

A computer program called AlphaGo Zero taught itself how to play a strategic game that is far more difficult than chess, Google found in a recent study, a feat both impressive and a little frightening.

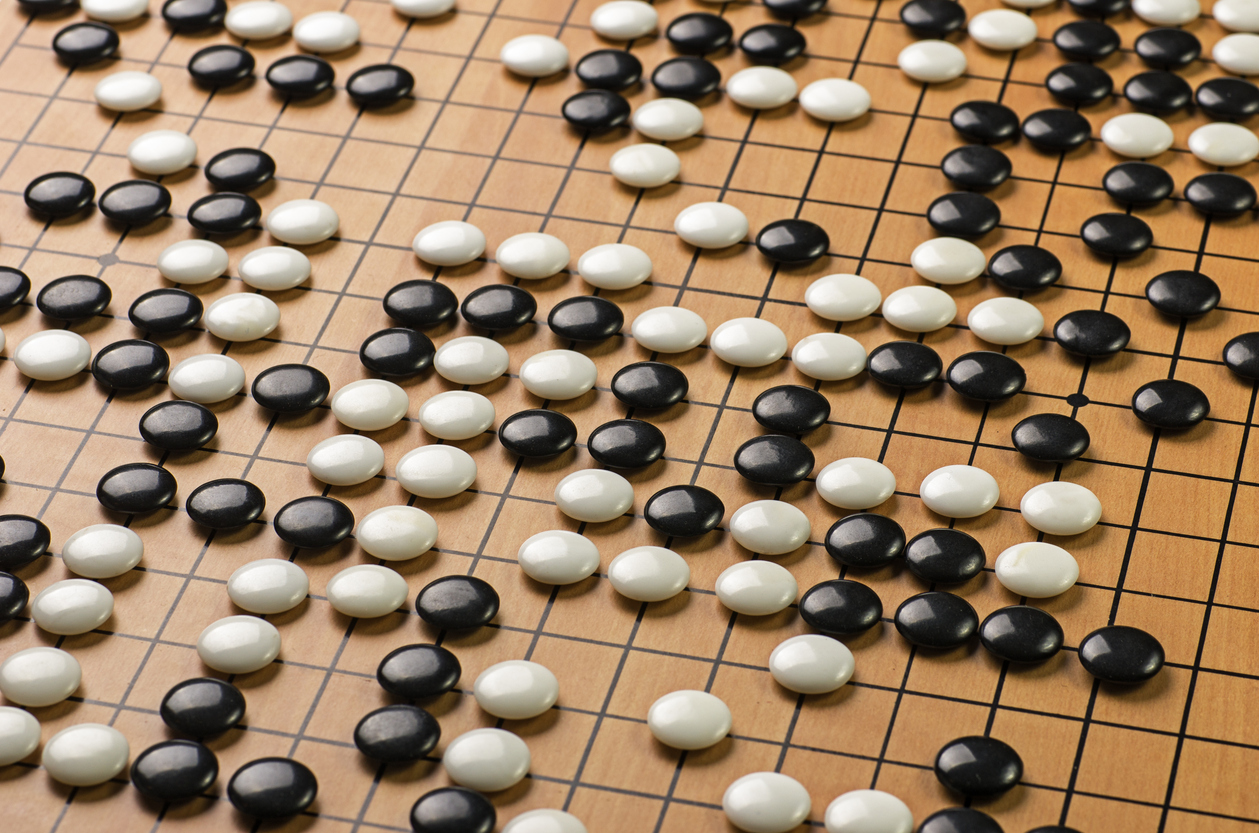

Go is an East Asian strategy game played on a 19-by-19 grid with a nearly limitless number of possible configurations, NPR explains. Last year, researchers at Google's DeepMind lab developed a program that learned from databases of known human configurations for the game. That program went on to beat world champion Lee Sedol, one of the best Go players on the planet.

This time around, researchers at DeepMind tried something new. Instead of teaching the program known human configurations for the game, as they had with the original AlphaGo, they let the machine discover those configurations itself, NPR says. The scientists found that human knowledge could actually limit their program, and that starting from a blank slate was much more effective.

The Week


The resulting program, dubbed AlphaGo Zero, spent three days playing 4.9 million games of Go against itself. It then beat the original, human-knowledge-based version 100 games to 0. AlphaGo Zero also discovered configurations that humans had not yet figured out.

While AlphaGo Zero may have far-reaching implications for the future, it remains to be seen whether this "blank slate" approach can be applied to solving other complex problems. Read the full published study at Nature, or read more about the story of AlphaGo Zero at NPR.


Elianna Spitzer is a rising junior at Brandeis University, majoring in Politics and American Studies. She is also a news editor and writer at The Brandeis Hoot. When she is not covering campus news, Elianna can be found arguing legal cases with her mock trial team.