The U.S. military is testing missiles that can steer themselves with artificial intelligence

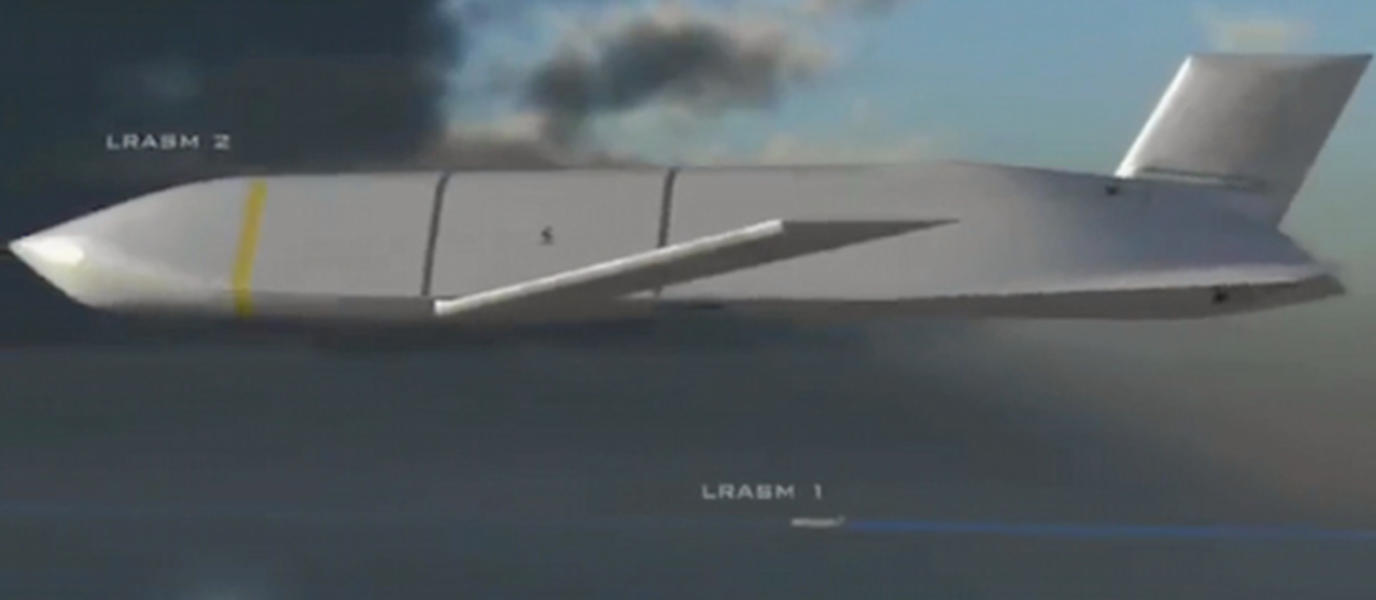

The Air Force and the Navy, in collaboration with weapons manufacturer Lockheed Martin, are testing missiles that can steer themselves and choose their own targets using a form of artificial intelligence, according to an eye-opening report from The New York Times. U.S. officials deny that the missiles are so-called "autonomous weapons" — i.e. free of human guidance and control — but experts disagree.

The report sheds light on the growing autonomous weapons industry, which could change warfare as we know it. From the Times:

But now, some scientists say, arms makers have crossed into troubling territory: They are developing weapons that rely on artificial intelligence, not human instruction, to decide what to target and whom to kill.

As these weapons become smarter and nimbler, critics fear they will become increasingly difficult for humans to control — or to defend against. And while pinpoint accuracy could save civilian lives, critics fear weapons without human oversight could make war more likely, as easy as flipping a switch. [The New York Times]

An international summit in Geneva later this week is set to determine whether the production of such weapons should be curbed under the Convention on Certain Conventional Weapons.

To which we will say just two words: Sky. Net.

Ryu Spaeth is deputy editor at TheWeek.com. Follow him on Twitter.