What's really limiting advances in computer tech

Let's talk about voltage scales

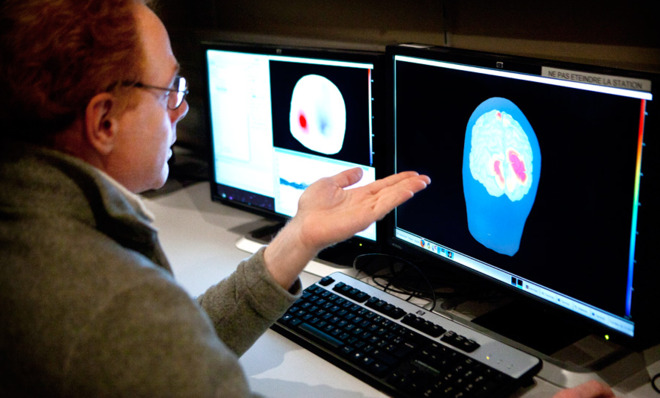

How does a biologist, or a computational neuroscientist, possibly have the wherewithal to stay current on all aspects of his field?

Nature, one of the world's top journals for peer-reviewed scientific breakthroughs, does what it can to encourage cross-discipline knowledge sharing by publishing non-technical essays from the leading lights in particular fields. For a lay person, this is often the best way to become current, very quickly, on very difficult subjects.

This week's topic, when boiled down to its essence, is: how small, how fast, how powerful can computers possibly get? The writer who answers these questions is Igor Markov, a professor of electrical engineering and computer science at the University of Michigan. I made my way through his prose rather slowly. It's quite dense because he packs an incredible amount of detail into an essay that spans only seven printed pages. When I was done, I had unlearned a number of basic beliefs I'd had about computers.

His conclusions, reflecting the current state of computer science, are illuminating for anyone who wants to figure out where the world is going, so I thought I'd share some of them. (This is dense stuff, so I may get some of the technical language a bit wrong. Please forgive me in advance.)

1. Quantum computing is probably not going to be as revolutionary as the popular press would have us believe. Such devices "hold promise only in niche applications and do not offer faster general-purpose computing because they are no faster for sorting and other specific tasks." He makes this analogy: the field of computer science is a decathlon, but quantum computing can only make the sprint much faster. Two examples he uses: searching the web and computer-aided design rely on kinds of computation that conventional digital computers handle as well as, if not better than, quantum computers. Quantum effects like entanglement, which is useful for hard-to-break codes, remain subject to the same physical limits as everything else.

Here's something else I didn't know:

2. The relationship between the size of an integrated circuit and the power it consumes is all out of whack. This is known as the "voltage scaling" problem. Markov writes: "Power consumption of transistors available in modern integrated circuits reduces more slowly than their size."

The one "law" that most of us know about these chips was laid down by Gordon Moore, a founder of Intel, who predicted that the number of transistors in a circuit would double roughly every two years (a figure often quoted as 18 months). So if Moore's law holds (and so far it has, because the entire industry has adopted it as a target), a very large imbalance emerges between the size of the transistors and the amount of power they need to work. The transistors are getting tinier; the power they use gets tinier more slowly. What this means, practically, is that power becomes a limiting factor in shrinking solid-state circuits. Moore's law might soon be "broken" because, beyond a certain point, packing more transistors into the same area concentrates more power, and therefore more heat, than the chip can shed without being damaged. In English, your computer will get too hot. Scientists haven't figured out how to cool it properly. (And data centers already consume 2 percent of all electricity used in the U.S.)
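The arithmetic behind that imbalance can be sketched in a few lines of Python. This is only an illustration, not anything taken from Markov's essay: the function name `power_density_trend` and both rates (transistor density doubling each generation, power per transistor shrinking by a factor of 1.4) are made-up assumptions chosen to show the shape of the problem.

```python
def power_density_trend(generations, density_gain=2.0, power_shrink=1.4):
    """Relative power per unit of chip area after each generation.

    density_gain: how many times more transistors fit in the same area
        each generation (2.0 is an assumed, Moore's-law-style doubling).
    power_shrink: factor by which the power drawn by one transistor
        falls each generation (1.4 is a hypothetical value, picked to be
        smaller than density_gain).
    """
    trend = []
    density = 1.0      # transistors per unit area, relative to today
    power_each = 1.0   # power per transistor, relative to today
    for _ in range(generations):
        density *= density_gain
        power_each /= power_shrink
        # Power per unit area = transistors per area * power per transistor.
        trend.append(density * power_each)
    return trend


if __name__ == "__main__":
    for gen, value in enumerate(power_density_trend(5), start=1):
        print(f"generation {gen}: {value:.2f}x the original power density")
```

If power per transistor shrank as fast as the transistors themselves (`power_shrink=2.0`), the trend would stay flat at 1.0. Any slower, and the heat per square millimeter climbs with every generation.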

A big corollary:

3. Only a tiny fraction of a circuit can operate at once, because switching circuits create heat that modern computers can only dissipate if most of the rest of the chip is "dark." The higher the performance of the chip in your computer, the smaller the share of that chip the computer is able to use at any one moment. And since tiny signals are so weak, "gates" or junctures in the circuits are built much like radio repeaters; they take the incoming signal and amplify it. This development has mammoth ramifications for the field.
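A back-of-the-envelope way to picture the "dark" portion: compare how much heat the cooling system can remove with how much the chip would produce if every part of it switched at once. The sketch below assumes power is spread evenly across the chip, and the function name `active_fraction` and the wattages are hypothetical, not figures from the essay.

```python
def active_fraction(cooling_budget_watts, full_chip_watts):
    """Share of the chip that can be switched on without overheating.

    cooling_budget_watts: heat the cooling system can carry away.
    full_chip_watts: power the chip would draw if all of it ran at once.
    Assumes (simplistically) that power is spread evenly over the chip.
    """
    if cooling_budget_watts <= 0 or full_chip_watts <= 0:
        raise ValueError("both power figures must be positive")
    # The active share can never exceed the whole chip.
    return min(1.0, cooling_budget_watts / full_chip_watts)


if __name__ == "__main__":
    # Hypothetical numbers: 100 W of cooling for a chip that could draw 400 W.
    lit = active_fraction(cooling_budget_watts=100.0, full_chip_watts=400.0)
    print(f"active: {lit:.0%}, dark: {1 - lit:.0%}")
```

With these invented figures, three quarters of the chip has to sit idle at any given moment.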

4. Energy efficiency is a casualty of speed. We love fast computers. But there are walls here, too. Computers, reduced to their essence, march to a clock, a steady beat derived from the vibration of a crystal (like a quartz crystal). The quicker the beat, the less time a signal has to travel through the wires on the chip. The smaller the chip's features, the thinner the wire; the thinner the wire, the higher the "delay" in transmitting the signal. So if the clock ticks too quickly, the signal can't make it to the end of the wire without being repeated. Repeaters cost energy, too. Computer manufacturers have stopped simply trying to make processing faster, and have turned instead to a temporary solution: they just add another CPU to the hardware. (This is the "dual core" or "quad core" that the blue shirts at the Apple store talk about.) The "work" here can be distributed across different cores, which means that things can get done quickly without bumping up against the energy and physical problems generated by a really fast computer.
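Why two slower cores can beat one fast one comes down to a standard rule of thumb for chip power: the energy spent switching is roughly capacitance times voltage squared times clock frequency. A slower clock can usually run at a lower voltage, and the voltage term is squared. The sketch below is an illustration under stated assumptions, not Markov's calculation: the capacitance, voltages, and clock speeds are hypothetical, and it assumes the work splits perfectly across both cores.

```python
def dynamic_power(capacitance_farads, voltage_volts, frequency_hz):
    """Approximate switching power of a chip: P = C * V^2 * f."""
    return capacitance_farads * voltage_volts ** 2 * frequency_hz


if __name__ == "__main__":
    C = 1e-9  # hypothetical switched capacitance per core, in farads

    # One fast core: 3 GHz at 1.0 V (assumed values).
    one_fast = dynamic_power(C, voltage_volts=1.0, frequency_hz=3e9)

    # Two slower cores: 1.5 GHz each at 0.7 V (assumed values).
    # Together they tick as many times per second as the fast core.
    two_slow = 2 * dynamic_power(C, voltage_volts=0.7, frequency_hz=1.5e9)

    print(f"one fast core:  {one_fast:.2f} W")
    print(f"two slow cores: {two_slow:.2f} W")
```

In this made-up case the pair of cores does the same number of clock ticks per second for about half the power. The catch is the assumption that the work divides cleanly, which many programs do not allow.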

You would be correct in assuming that Silicon Valley is not going to let alleged physical constraints on technology get in its way; there are all sorts of potential ways to mitigate some of the problems that crop up at tiny scales, and billions upon billions of dollars are being spent on this basic research. As Ars Technica's John Timmer writes:

[O]ne comes away with the sense that the greatest limitation we face is human cleverness. Although there are no technologies on the horizon that Markov seems to be especially excited about, he's also clearly optimistic that we can either find creative ways around existing roadblocks or push progress in other areas to such an extent that the roadblocks seem less important. The thing about these creative solutions is that they're hard to recognize until they're actually underway. [Ars Technica]

Marc Ambinder is TheWeek.com's editor-at-large. He is the author, with D.B. Grady, of The Command and Deep State: Inside the Government Secrecy Industry. Marc is also a contributing editor for The Atlantic and GQ. Formerly, he served as White House correspondent for National Journal, chief political consultant for CBS News, and politics editor at The Atlantic. Marc is a 2001 graduate of Harvard. He is married to Michael Park, a corporate strategy consultant, and lives in Los Angeles.
