How quantum computing could change everything

It could revolutionize encryption — and help us peer even deeper into the underlying fabric of reality

The trend in computing for decades has been packing more power into smaller spaces — your smartphone, after all, is leagues ahead of the network of computers that sent Apollo 11 to the moon and back. But the next wave of computers, for some dreamers, gets really, really small, down to the quantum level. A quantum computer, essentially, is a way to harness quantum mechanics to process information. Its fundamental unit is called the qubit, analogous to the bit in conventional computers.

What's it made of?

A bit in an ordinary computer records one of two states, which we usually think of as 0 or 1. In some of the earliest computers, a bit was recorded as either a hole or no hole, in a paper punch card or in a paper tape. As computers got more advanced, the ways to represent bits changed: You could translate a 0 or 1 from the "open" or "closed" position of an electrical relay, the magnetic polarity of a strip of film, the presence or absence of a tiny pit on a disc that is read by a laser, or two different levels of electric charge.

A qubit, on the other hand, takes advantage of some of the quirky features of quantum mechanics. Since particles can exist in a superposition of states, a qubit can be in a blend of 0 and 1 at the same time, settling on one value only when it is measured. And if you could link qubits together, you could take advantage of quantum entanglement, which Einstein derided as "spooky action at a distance": measurements on entangled particles stay correlated no matter how far apart the particles are, though the effect cannot be used to send messages faster than light.
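To make superposition and entanglement a little more concrete, here is a minimal sketch in Python that imitates measuring qubits on an ordinary computer. It is only an illustration: the names `probabilities`, `measure`, `plus`, and `bell` are made up for this example, and a real quantum computer does not work by keeping a list of numbers in memory. Each possible outcome gets an "amplitude," and the chance of seeing that outcome is the amplitude squared.

```python
import math
import random


def probabilities(amplitudes):
    """Chance of each outcome: the squared magnitude of its amplitude."""
    return [abs(a) ** 2 for a in amplitudes]


def measure(amplitudes, rng):
    """Pick one outcome index at random, weighted by the probabilities."""
    weights = probabilities(amplitudes)
    return rng.choices(range(len(amplitudes)), weights=weights, k=1)[0]


# One qubit in an equal superposition: amplitudes for outcomes 0 and 1.
plus = [1 / math.sqrt(2), 1 / math.sqrt(2)]

# Two entangled qubits: amplitudes for outcomes 00, 01, 10, 11.
# Only 00 and 11 have any weight, so the two qubits always agree.
bell = [1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2)]

rng = random.Random(0)  # fixed seed so repeated runs match

# Measure the single qubit many times and count the 0s and 1s.
single_counts = [0, 0]
for _ in range(1000):
    single_counts[measure(plus, rng)] += 1

# Measure the entangled pair many times and record which results show up.
pair_outcomes = set()
for _ in range(1000):
    pair_outcomes.add(format(measure(bell, rng), "02b"))

print(single_counts)          # roughly half 0s and half 1s
print(sorted(pair_outcomes))  # only results where both qubits match
```

The single qubit comes up 0 about half the time and 1 the other half, while the entangled pair never produces a mismatched result such as 01 or 10.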

What's the use?

So what use are all these odd features? Thanks to those quantum mechanical quirks, a quantum computer could crunch certain complicated calculations much more quickly than the fastest computers today. Because a qubit exists in a superposition of one and zero, rather than one or the other, a group of qubits can encode many possibilities at once. That should let a quantum computer tackle problems beyond the reach of normal computers, like quickly calculating the factors of very large numbers. (A number's factors are the whole numbers that divide it evenly: the factors of 12 are 1, 2, 3, 4, 6, and 12.)
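For a sense of why factoring is hard for ordinary machines, here is a small Python sketch of the most obvious approach, trial division. The function name `factors` is just chosen for this example, and real factoring software uses far cleverer methods, but the basic difficulty is the same: the work balloons as the number gets longer.

```python
def factors(n):
    """Return every whole number that divides n evenly, in ascending order."""
    if n < 1:
        raise ValueError("n must be a positive whole number")
    small, large = [], []
    d = 1
    # Divisors come in pairs (d, n // d), so checking up to the square
    # root of n is enough to find them all.
    while d * d <= n:
        if n % d == 0:
            small.append(d)
            if d != n // d:
                large.append(n // d)
        d += 1
    return small + large[::-1]


print(factors(12))  # [1, 2, 3, 4, 6, 12]
```

Even with the square-root shortcut, the loop runs about a million times for a 12-digit number. For the numbers hundreds of digits long that are used in encryption, the count of steps is itself a number with hundreds of digits.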

Calculating factors might not seem like a big deal, until you realize that factoring plays a huge role in encryption: many widely used codes are safe only because ordinary computers cannot factor very large numbers in any reasonable amount of time. Theoretically, a quantum computer could be the key to stripping away the protections that are used to keep credit card numbers secret in online shopping or to create untraceable email addresses for whistleblowers.
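The link between factoring and code-breaking can be sketched with a toy version of RSA, one widely used encryption scheme whose safety rests on factoring being hard. The article does not name a specific scheme, so RSA is this example's choice, and the tiny primes, the message, and the helper name `find_prime_factors` are all assumptions made for illustration. Real keys use primes hundreds of digits long.

```python
def find_prime_factors(n):
    """Recover p and q from n = p * q by trial division (tiny n only)."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    raise ValueError("n has no nontrivial factors")


# Key setup, done in private by the person receiving messages.
p, q = 61, 53                        # secret primes (toy-sized)
n = p * q                            # public: anyone may see this
e = 17                               # public exponent
d = pow(e, -1, (p - 1) * (q - 1))    # private exponent (Python 3.8+)

# Anyone can encrypt using only the public values n and e.
message = 65
ciphertext = pow(message, e, n)

# An eavesdropper sees n, e, and the ciphertext. If n can be factored,
# the private exponent can be rebuilt and the message read.
p_found, q_found = find_prime_factors(n)
d_found = pow(e, -1, (p_found - 1) * (q_found - 1))
recovered = pow(ciphertext, d_found, n)

print(recovered == message)  # True
```

Here the attack works instantly because `n` is only four digits long. The protection in practice comes entirely from choosing `n` so large that no ordinary computer could factor it, which is exactly the step a quantum computer could, in theory, make easy.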

"That is the 'killer app' in quantum computing: Stuff like factoring numbers, breaking code — basically being a big pain in the butt to the places with three letters…NSA, CIA, et cetera," says MIT mechanical engineer Seth Lloyd (who sparred with Carnegie Mellon University computer scientist Edward Fredkin at the 2011 World Science Festival program "Rebooting the Cosmos" over whether any useful quantum computer could ever be made.)

Just as quantum computers could let someone easily bypass existing encryption methods, the technology could also let people encrypt information in new and even more secure ways. And codes aren't the only use for quantum computers: We could use them to peer even deeper into the underlying fabric of reality.

"The bottom of the world is quantum mechanical — is digital," Lloyd says. "In my mind, the most important application of quantum computing is understanding the fundamentally digital nature of the universe."

Have we made one yet?

Scientists have already built some collections of qubits for use in experiments, but a lot of work remains to be done to make truly useful quantum computers. Researchers at IBM are making qubits from superconducting metal circuits, but are encountering high error rates. "Entanglement is necessary for quantum computing, but can also lead to errors when it occurs between the quantum computer and the environment (i.e. anything that is not the computer itself)," IBM researcher Jerry Chow wrote in a recent blog post. "Quantum effects disappear when the system entangles too strongly to the external world, which makes quantum states very fragile. Yet, there is a kind of tension, since the quantum computer must be coupled to the external world so the user can run programs on it and read the output from those programs."

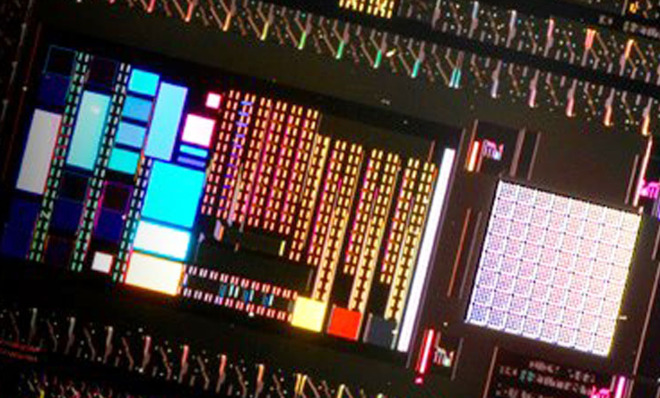

Canadian company D-Wave Systems has already developed and sold quantum processors like the D-Wave Two, specifically designed for "quantum annealing," a way to find the absolute minimum of a certain mathematical function. D-Wave processors have qubits hard-wired into a circuit to perform one type of task; they aren't general-purpose machines. But some scientists have cast doubt on whether D-Wave's processors really act like quantum computers, or whether any quantum effects they do exhibit actually help to speed up the calculations. In one test, the D-Wave Two crunched numbers 10 times faster than a regular computer, but could also go 100 times slower, according to Physics World.
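The idea of hunting for the absolute minimum of a function can be illustrated with simulated annealing, a classical cousin of the technique that runs on any ordinary computer. To be clear, this is not how D-Wave's hardware works and it uses no quantum effects. The bumpy function `energy` and all of the settings below are invented for this sketch.

```python
import math
import random


def energy(x):
    """A made-up bumpy function with many dips, only one of them the lowest."""
    return x * x + 10 * math.sin(3 * x)


def simulated_annealing(rng, steps=20000, start=8.0,
                        temp_start=10.0, temp_end=1e-3):
    """Wander around x, accepting uphill moves less often as things cool."""
    x = best = start
    for i in range(steps):
        # Temperature falls smoothly from temp_start toward temp_end.
        temp = temp_start * (temp_end / temp_start) ** (i / steps)
        candidate = x + rng.gauss(0, 1)
        delta = energy(candidate) - energy(x)
        # Always accept a downhill move; accept an uphill move only
        # sometimes, and more rarely as the temperature drops.
        if delta < 0 or rng.random() < math.exp(-delta / temp):
            x = candidate
            if energy(x) < energy(best):
                best = x
    return best


best = simulated_annealing(random.Random(0))
print(best, energy(best))
```

Early on, the high "temperature" lets the search climb out of shallow dips instead of getting stuck in the first one it finds. As the temperature falls, the search settles into the deepest dip it has reached.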

Microsoft is trying its hand at building another kind of qubit called a "topological qubit," made by manipulating particles called non-Abelian anyons so the paths they take form a braid (Quanta magazine has a good, detailed explanation of the physics behind it). Theoretically, the topological qubit would be less prone to the errors that afflict other qubits, but one major problem remains: Scientists have yet to conclusively detect a non-Abelian anyon.

Even when the physics and engineering kinks are worked out, quantum computers probably won't be landing on your desk anytime soon. The superconductors that power them need to be kept very cold (usually in a container that "looks like a gigantic beer keg," according to Lloyd). But with the awe-inspiring power (theoretically) lurking in these quantum machines, it's understandable that so many scientists would throw their weight into this seemingly quixotic quest.

"When you start thinking about quantum computing, you realize that you yourself are some kind of clunky chemical analog computer," Microsoft's Michael Freedman told the MIT Tech Review. There's nothing more humbling — or, perhaps, weirdly invigorating — than that sentiment.