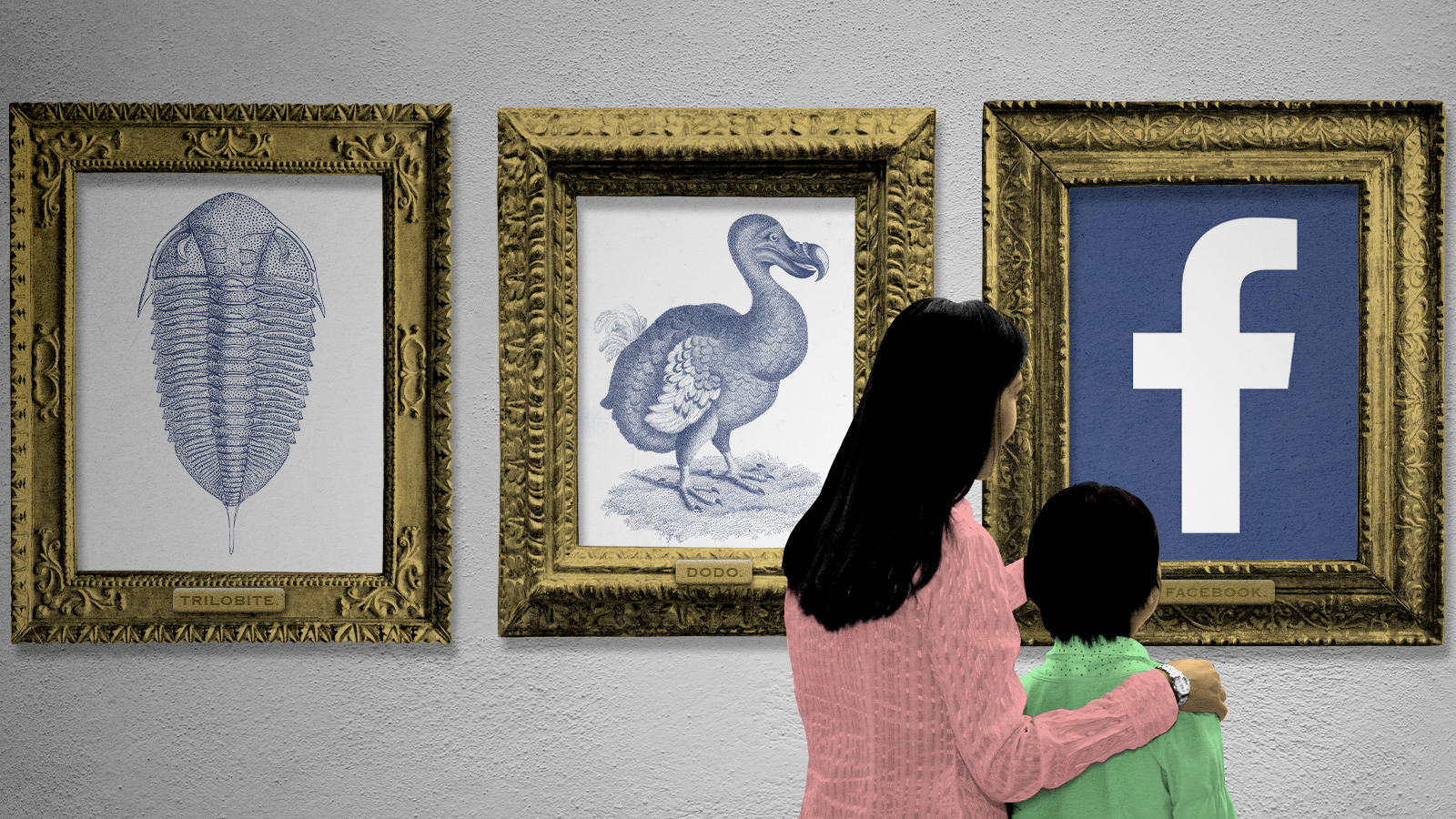

The sticky problem of making Facebook go away

How could we make the outage permanent?

For a few hours on Monday, billions of people across the globe experienced something extraordinary: They got a taste of a world without social media.

Well, not all of social media. Twitter was still there, and so were Snapchat, TikTok, Reddit, and lots of other platforms. But Facebook was gone, and so were Instagram, WhatsApp, Messenger, and Oculus. It was a glimpse, for about five hours, of what it would be like to live without constant, instantaneous networked communication through the machines most of us carry around in our pockets and purses, and interact with at work, at home, and everywhere else we go.

What if we decided to make it permanent — to greatly curtail or even eliminate these platforms from our lives and societies? Many of us probably wouldn't want to. Others may think doing so would be an improvement but doubt we have the will or the means to make it happen. But I suspect many others would welcome the chance to quiet the digital din in which we now live out our lives, if only we could figure out how.

Monday's tech glitch — apparently caused by "configuration changes on the backbone routers that coordinate network traffic between our data centers," as Facebook put it in a statement published later that day — coincided exactly with the latest round of toxic press for Facebook. Whistleblower Frances Haugen has been testifying before Congress about how, in her capacity as a data scientist at the company, she encountered abundant evidence that Facebook knew that its products and services caused political harm to democracies (through the spreading of misinformation) and emotional harm to teenagers (by encouraging them to share airbrushed images of themselves and compete with each other for "likes" and comments). The company knew about the harm and yet did nothing to change because its entire business model is built on users doing the things that cause the harm.

That would seem to point toward the need for much more sweeping government regulation of Facebook and possibly other social media companies as well. But how? Is social media like a business that provides a vital product or service but produces negative externalities (like pollution) that can and should be controlled and regulated? Or are the negative externalities the entire point, making social media companies much more like drug dealers who turn users into addicts with few if any positive consequences? If the latter is closer to the truth, then we face the question of whether our approach to hard drugs — prohibition rather than regulation — also makes sense for social media.

Although increasing numbers of politicians like to make a show of bashing social media companies, it's unclear whether there's any serious will to act against them, at least in this country. The Federal Trade Commission's antitrust lawsuit against Facebook could potentially force it to sell off Instagram or other holdings, but it wouldn't force a change in the nature of the company's core business practices. The European Union is entertaining more sweeping regulations. But even they would stop far short of banning Facebook, which would probably prove unpopular, let alone trying to break it up, which would go far beyond the power of European regulators.

That's because Facebook is an American company. If it's going to be broken up, that's going to have to happen here. But would it? I'd say it's highly unlikely — and not only because Mark Zuckerberg could just pick up and move himself, his fortune, his expertise, his top employees, and (most crucially) his hugely marketable trove of information about his users' preferences to any country in the world willing to host his digital enterprise. After a rocky period of transition, most people would probably notice little difference in their experience on the platform with it operating from New Zealand, Singapore, or Mauritius.

Facebook is also unlikely to face the music because of our tendency to be persuaded by libertarian arguments that tell us the market will save us. Don't worry, we're told — if Facebook and other digital platforms really are bad for us, individuals will eventually choose to disengage from them, driving the companies into the ground or forcing them to change how they do business in order to survive. Allowing that process to run its course is much better than attempting to control it artificially using government power.

Too bad that in cases of addiction, the market is more the source of the problem than a solution to it. We choose to participate in these platforms because they warp our preferences by giving each of us a surge of dopamine whenever we receive a "like" in response to a photo or see our prejudices confirmed by the stories that appear in our individually curated news feeds. Waiting for the market to punish a company in the business of giving us information ever-more perfectly tailored to our likes is like trying to quash the drug trade by telling heroin addicts to "Just Say No." It might make us feel like we're doing something useful, but it isn't going to work.

Some of government's most crucially important work involves trying to save us from ourselves, and each other. Regulating or breaking up social media companies would just be the latest example of that.

Which doesn't mean we'll do it right, or do it at all. Acting requires overcoming the same resistance that keeps the market from punishing Facebook all on its own. (People complain about these companies, but they also love to use their products.) And as with everything these days, polarized partisanship tends to get in the way of addressing problems intelligently and holistically. Instead, we get Democrats trying to force social media companies to do things they think will undercut support for their all-too-dangerous opponents and Republicans favoring a different set of responses to accomplish the reverse. It would be vastly better for officials from both parties to do the arduous work of thinking about how adjustments to social media could make public and private life in the 21st-century United States a little bit better overall.

At the moment, this doesn't seem especially likely. But that doesn't mean we shouldn't keep trying and hoping for more. As that work continues, remember how it felt during those fleeting hours when Facebook, Instagram, and the other platforms disappeared — and remember we have it in our power to move our world somewhat closer to that alternative reality.

Damon Linker is a senior correspondent at TheWeek.com. He is also a former contributing editor at The New Republic and the author of The Theocons and The Religious Test.