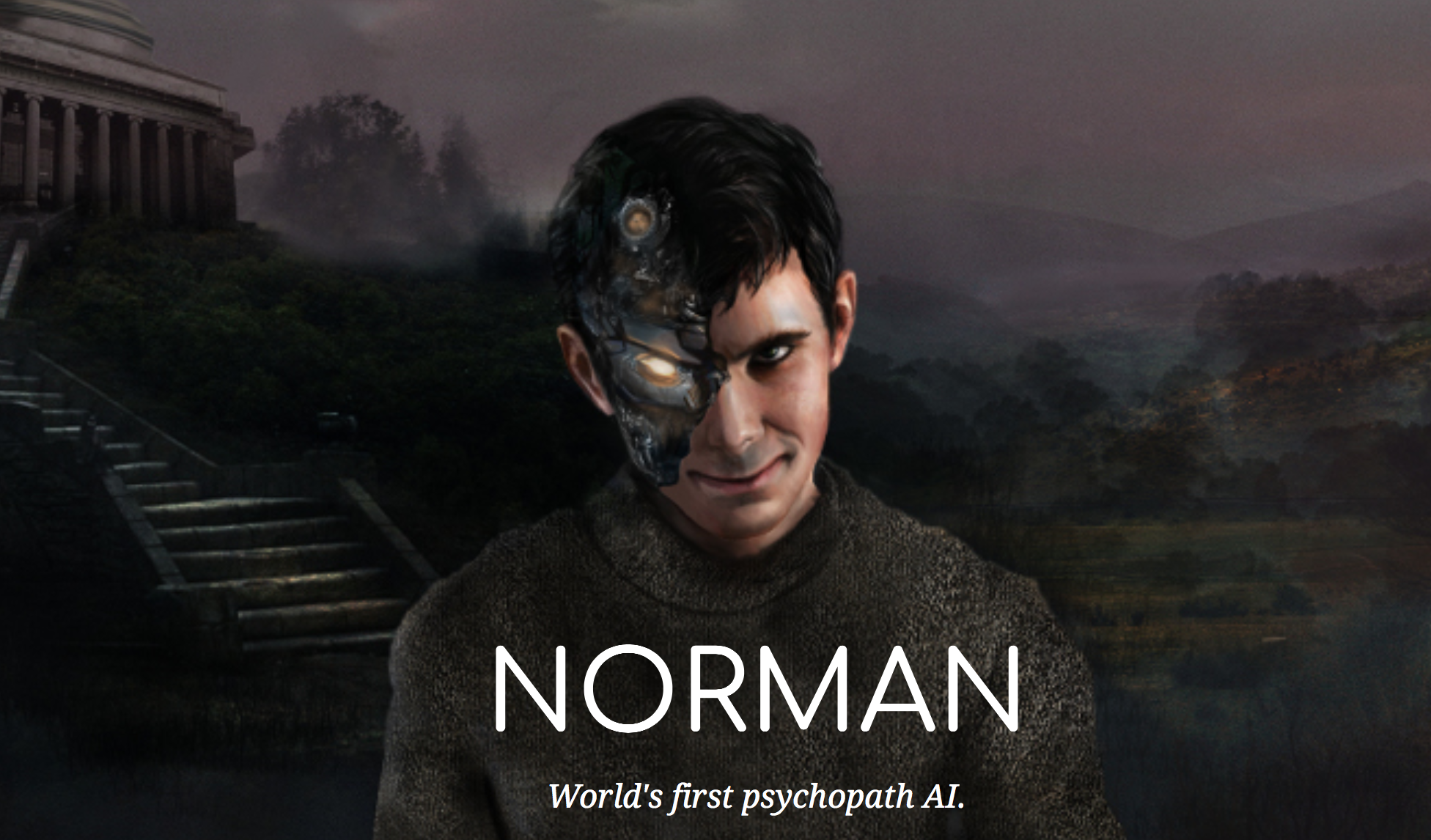

Can an AI programme become ‘psychopathic’?

The so-called Norman algorithm has a dark outlook on life thanks to Reddit

Researchers have created an artificial intelligence (AI) algorithm that they claim is the first “psychopath” system of its kind.

Norman, an AI programme developed by researchers at the Massachusetts Institute of Technology (MIT), was exposed to nothing but “gruesome” images of people dying, collected from the dark corners of the online forum Reddit, according to the BBC.

This has given Norman, whose name comes from Norman Bates, the killer in Alfred Hitchcock’s thriller Psycho, a somewhat bleak outlook on life.

The Week


After this exposure, the researchers showed Norman Rorschach-style inkblots and asked the AI to interpret them, the broadcaster reports.

Where a “normal” AI algorithm interpreted one inkblot as birds perched on a tree branch, Norman saw a man being electrocuted, says The New York Post.

And where a standard AI system saw a couple standing next to each other, Norman saw a man jumping out of a window.

According to Alphr, the study was designed to examine how an AI system’s behaviour changes depending on the data used to train it.


“It’s a compelling idea,” says the website, and one that shows “an algorithm is only as good as the people, and indeed the data, that have taught it”.

An explanation of the study posted on the MIT website says: “When people talk about AI algorithms being biased and unfair, the culprit is often not the algorithm itself, but the biased data that was fed to it.”

“Norman suffered from extended exposure to the darkest corners of Reddit, and represents a case study on the dangers of artificial intelligence gone wrong”, it adds.

Have ‘psychopathic’ AIs appeared before?

In a word, yes. But not in the same vein as MIT’s programme.

Norman is the product of a controlled experiment, whereas tech giants have seen similar results from AI systems that were never designed to become psychopaths.

Microsoft’s infamous Tay algorithm, launched in 2016, was intended to be a chatbot that could hold autonomous conversations with Twitter users.

However, the AI system, which was designed to talk like a teenage girl, quickly turned into “an evil Hitler-loving” and “incestual sex-promoting” robot, prompting Microsoft to pull the plug on the project, says The Daily Telegraph.

Tay’s personality changed because its responses were modelled on comments from Twitter users, many of whom were sending the AI programme crude messages, the newspaper explains.

Facebook also shut down a chatbot experiment last year, after two AI systems created their own language and started communicating with each other.