New study finds that potato chip bags are listening to your every word

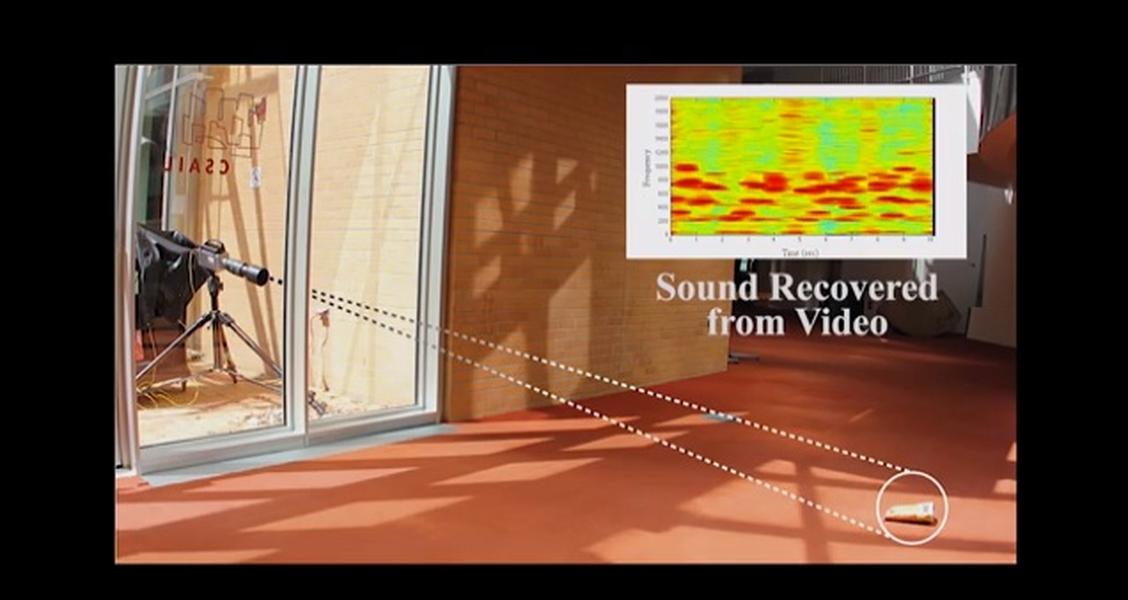

Researchers at MIT, Adobe, and Microsoft have developed an algorithm that can reconstruct an audio signal from video by analyzing the tiny vibrations of objects.

The team recorded videos of aluminum foil, the surface of a glass of water, the leaves of a plant, and a potato chip bag, then extracted audio signals from the footage. In the case of the potato chip bag, researchers filmed people talking 15 feet away behind soundproof glass, yet were still able to recover "intelligible speech from the vibrations of the bag," MIT reports.

One of the study's authors, graduate student Abe Davis, says that when sound hits objects it makes them vibrate, but those vibrations are so tiny that the naked eye can rarely see them. "People didn't realize that this information was there," he said.

Watch the video below to gain further insight into these "visual microphones" and to see and hear the actual experiments. --Catherine Garcia

A free daily email with the biggest news stories of the day – and the best features from TheWeek.com

Catherine Garcia has worked as a senior writer at The Week since 2014. Her writing and reporting have appeared in Entertainment Weekly, The New York Times, Wirecutter, NBC News and "The Book of Jezebel," among others. She's a graduate of the University of Redlands and the Columbia University Graduate School of Journalism.