Scientists are using AI technology to translate thoughts into speech

Scientists are now using artificial intelligence to help people who cannot speak.

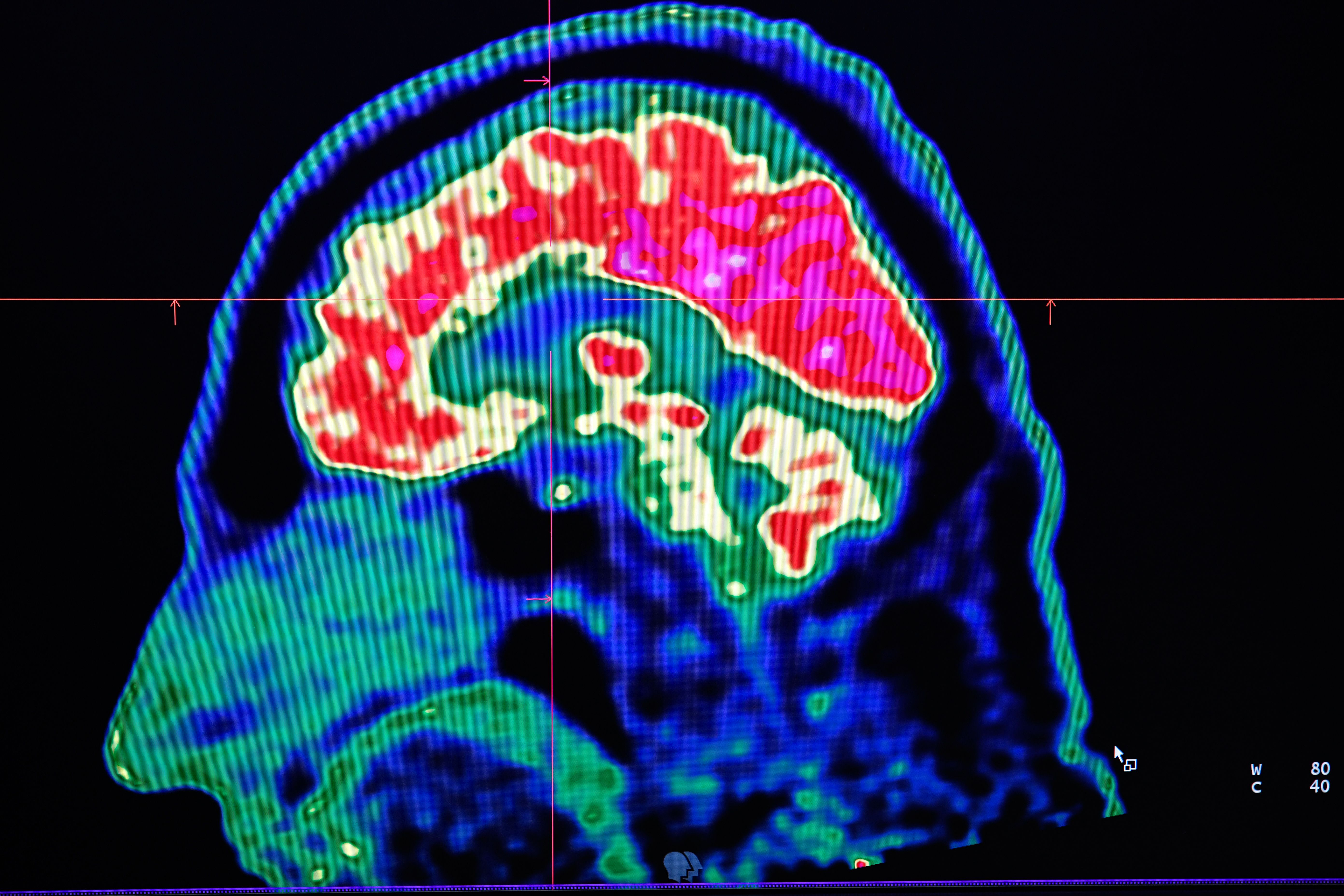

Researchers at Columbia University's Mortimer B. Zuckerman Mind Brain Behavior Institute are creating bots to translate brain signals into speech, per a study published Tuesday in Scientific Reports.

The researchers hope the AI technology can help people with speech disabilities, like someone recovering from a stroke, or someone with epilepsy or amyotrophic lateral sclerosis, a motor neuron disease that most notably affected the late Stephen Hawking.

Researchers are using a vocoder, a computer algorithm that synthesizes speech after being trained on recordings of people talking, writes Gizmodo. Popular voice command systems like Apple's Siri and Amazon's Alexa also use this technology, says Nima Mesgarani, the paper's senior author. While the technology can't translate all of a person's imagined thoughts, it can use patterns in brain signals to reconstruct words, rather than having a person select pre-programmed words from a system, as Hawking did.

"Our voices help connect us to our friends, family and the world around us, which is why losing the power of one's voice due to injury or disease is so devastating," said Mesgarani. "We have a potential way to restore that power. We've shown that, with the right technology, these people's thoughts could be decoded and understood by any listener."

In the study, patients listened to a person reading the numbers 0 through 9 while researchers scanned their brains, and the AI decoded the resulting brain signals into speech. The AI software correctly picked up at least 75 percent of the patients' language, researchers found.
