What can the ‘world’s largest computer chip’ actually do?

Cerebras Systems’ processor has 50,000 times as many cores as an iPhone chip

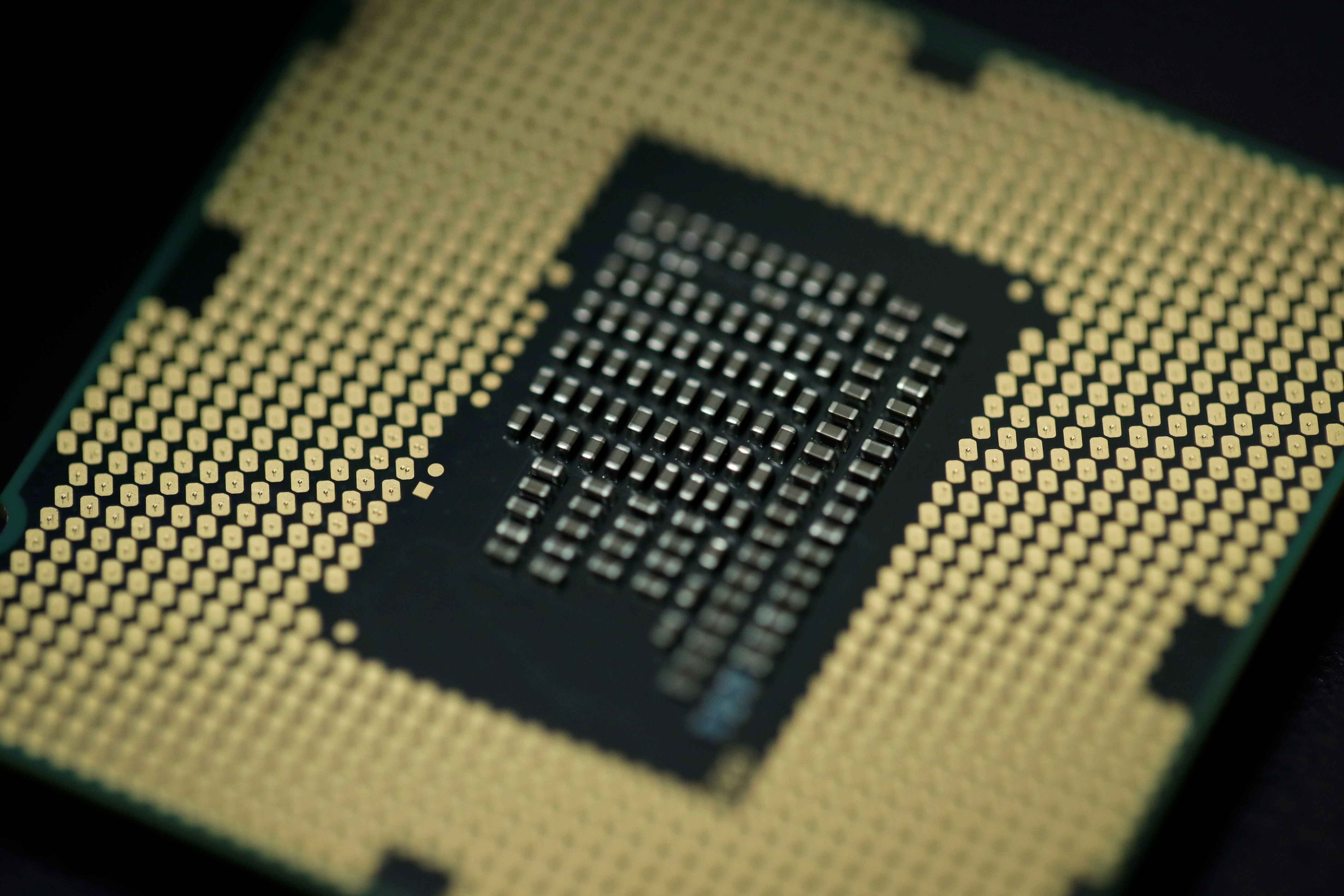

Californian tech start-up Cerebras Systems has unveiled what it claims is the world’s largest computer chip, which it hopes will be fundamental to the future of complex artificial intelligence (AI) systems.

Dubbed the “Wafer Scale Engine”, the chip is equipped with close to half a million processing cores and is larger than an iPad, the BBC reports.

It has been developed with speed and efficiency in mind to power the next generation of AI systems, from driverless cars to surveillance programs, the broadcaster adds.

However, experts warn that the chip’s size could make it impractical for buyers looking to integrate the processor into their existing infrastructure.

How big is the chip?

Massive, compared with a conventional computer processor.

The chips that power today’s smartphones and tablets are “smaller than a fingernail”, while the processors that drive “beefy devices”, such as cloud servers, “aren’t much bigger than a postage stamp”, Wired explains.

However, the Wafer Scale Engine, which the tech site describes as a “silicon monster”, is considerably larger than any computer processor found in today’s consumer devices.

The processor measures 46,225 square millimetres and consists of 1.2 trillion transistors, 18GB of “on-chip memory” and 400,000 processing cores, notes TechCrunch.

To put that into perspective, the A12 processor that powers the current range of iPhones has a footprint of 83.27 square millimetres and sports eight cores, according to TechInsights.
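The comparison can be checked with some quick arithmetic. The short Python sketch below uses only the figures quoted above from TechCrunch and TechInsights; the variable names are illustrative, and the side length assumes, purely for the sake of the calculation, that the chip is a perfect square.

```python
import math

# Figures as quoted in the article (TechCrunch and TechInsights).
WSE_AREA_MM2 = 46_225   # Wafer Scale Engine area, square millimetres
WSE_CORES = 400_000     # Wafer Scale Engine processing cores
A12_AREA_MM2 = 83.27    # Apple A12 footprint, square millimetres
A12_CORES = 8           # A12 core count as cited in the article

# How many times more cores and silicon area the larger chip has.
core_ratio = WSE_CORES / A12_CORES
area_ratio = WSE_AREA_MM2 / A12_AREA_MM2

# Assumption for illustration only: treat the chip as a perfect square.
wse_side_mm = math.sqrt(WSE_AREA_MM2)

print(f"Core ratio: {core_ratio:,.0f}x")
print(f"Area ratio: {area_ratio:,.0f}x")
print(f"Side length if square: {wse_side_mm:.0f} mm")
```

On these numbers the Wafer Scale Engine has 50,000 times as many cores as the A12, which is where the headline figure comes from, while covering roughly 555 times the area.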

What can it do with all that power?

All that power may appeal to video gamers who value the very best graphics, but the Wafer Scale Engine has been developed squarely for the growing AI market.

According to the Financial Times, only a handful of tech companies, including Cerebras Systems, have taken on the challenge of developing a processor that’s capable of driving the power-intensive operation of “deep learning”.

Deep learning essentially involves training an AI system by having it analyse thousands of data samples, so that it can then function with a degree of autonomy.

Training is a “far more data-intensive job” than “inference”, the process of applying AI systems to real-world products, an area in which “more than 50 companies” around the world are currently working, the FT notes.

What are the drawbacks?

Arguably the most significant drawback is the chip’s sheer size, which could raise concerns over its potential for mass adoption.

Speaking to the BBC, Dr Ian Cutress, editor of tech news site AnandTech, said: “One of the advantages of smaller computer chips is they use a lot less power and are easier to keep cool.

“When you start to deal with bigger chips like this, companies need specialist infrastructure to support them, which will limit who can use it practically.”

However, given that AI is “where the big dollars are going at the moment”, Cutress predicts that size may be less of a factor for tech firms willing to pay for power.

Eugenio Culurciello, researcher at chipmaker Micron Technology, agrees with Cutress. He told Wired that the processor “will be expensive, but some people will probably use it”.